Richard L. Hudson (Rick) is best known for his work in memory management, including the invention of the Train, Sapphire, and Mississippi Delta algorithms, as well as GC stack maps, which enabled garbage collection in statically typed languages like Java, C#, and Go. He has published papers on language runtimes, memory management, concurrency, synchronization, memory models, and transactional memory. Rick is a member of Google’s Go team, where he works on Go’s GC and runtime issues.

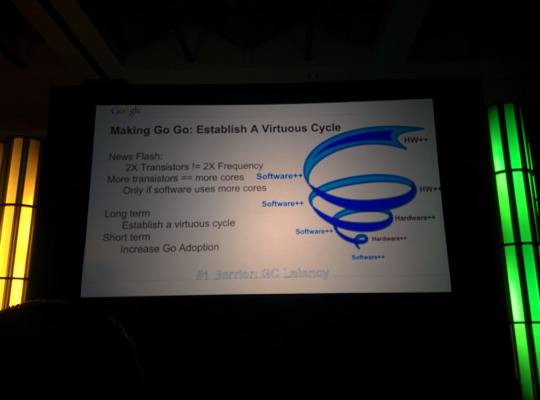

In economics, a virtuous cycle is a positive feedback loop between processes that feed into one another. Traditionally in tech, there has been a virtuous cycle between software and hardware development. CPU hardware improves, which enables faster software to be written, which in turn drives further improvements in CPU speed and compute power. This cycle was healthy until about 2004, which is roughly when Moore’s Law started to end.

These days, 2x transistors != 2x faster programs. More transistors == more cores, but software has not evolved to fully utilize more cores. Because software today cannot adequately put multiple cores to work, the hardware guys are not going to keep adding cores. The cycle is sputtering.

A long-term goal of Go is to reboot this virtuous cycle by enabling more concurrent, parallel programs. In the shorter term, we need to increase Go adoption. One of the biggest complaints about the Go runtime right now is that GC pauses are too long. Rick jokes that when his team first took on this problem, their initial reaction as engineers was not to actually solve it, but to look for workarounds like:

But Russ Cox shot these ideas down for some reason, so they decided to roll up their sleeves and actually try to improve the Go GC. The algorithm they developed trades program execution throughput for reduced GC latency: Go programs get a little slower in exchange for lower GC latencies.

How can we make latency tangible?

So how much GC can we do in a millisecond?

Java GC vs. Go GC

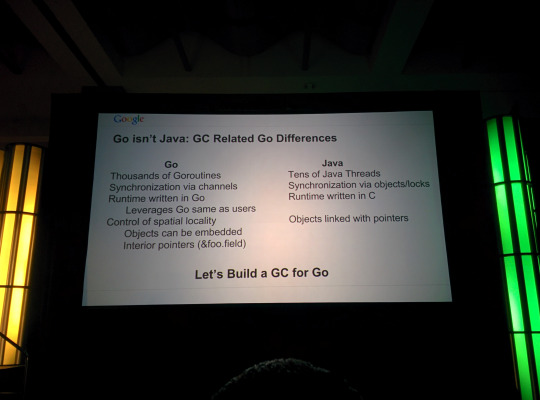

Go:

Java:

The biggest difference is the issue of spatial locality. In Java, everything is a pointer, whereas Go enables you to embed structs within one another. Following pointers many layers deep causes a lot of issues for a garbage collector.
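To make the locality point concrete, here is a minimal sketch. The type names (`Point`, `Embedded`, `Indirect`) and the `sizes` helper are hypothetical and chosen for illustration only; the `Indirect` layout is an approximation of how object fields behave in Java, not a claim about any particular JVM.

```go
package main

import (
	"fmt"
	"unsafe"
)

// Point is a small value type (hypothetical, for illustration).
type Point struct{ X, Y int64 }

// Embedded stores its Points inline: one contiguous block of memory
// with no interior pointers for a collector to follow.
type Embedded struct{ A, B Point }

// Indirect stores pointers to its Points, roughly like object fields
// in Java: the Points are separate objects reached through pointers.
type Indirect struct{ A, B *Point }

// sizes reports the in-place size of each layout in bytes.
func sizes() (embedded, indirect uintptr) {
	return unsafe.Sizeof(Embedded{}), unsafe.Sizeof(Indirect{})
}

func main() {
	e := Embedded{A: Point{1, 2}, B: Point{3, 4}}
	i := Indirect{A: &Point{1, 2}, B: &Point{3, 4}}

	es, is := sizes()
	fmt.Println("embedded bytes:", es, "pointers to follow: 0")
	fmt.Println("indirect bytes:", is, "pointers to follow: 2")

	// Same data either way; only the memory layout differs.
	fmt.Println(e.A.X+e.B.Y, i.A.X+i.B.Y)
}
```

The embedded form keeps all four integers next to each other, so reading it touches one region of memory and the collector has nothing to trace inside it. The indirect form looks smaller in place, but each field is a hop to another object.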

GC basics

Here’s a quick primer on garbage collectors. They typically involve 2 phases:

Go GC

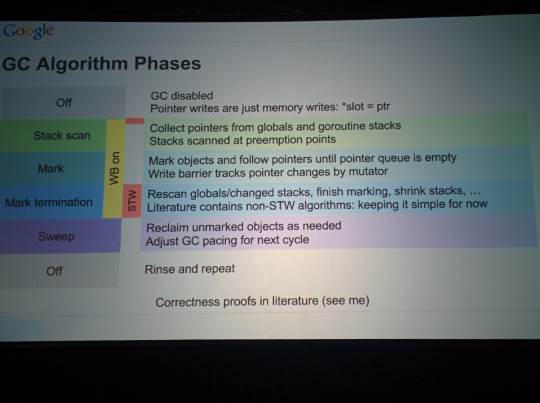

The Go GC algorithm uses a combination of write barriers and short stop-the-world pauses. Here are its phases:

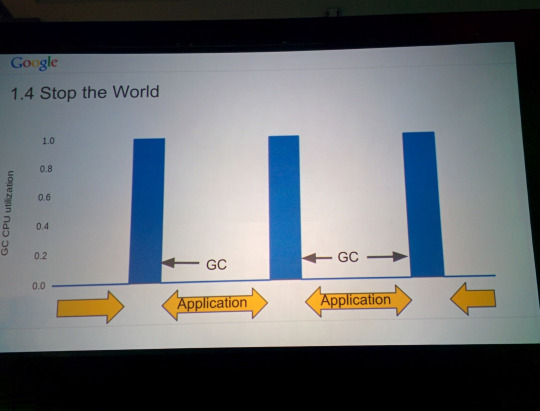
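To illustrate what a write barrier does while marking runs concurrently with the program, here is a toy tri-color sketch. It is not the Go runtime's code: the names (`collector`, `shade`, `writePointer`, `drain`) are hypothetical, and the barrier shown is a simple insertion-style barrier that shades the target of every pointer store made during marking.

```go
package main

import "fmt"

type color int

const (
	white color = iota // not yet seen by the collector
	grey               // seen, but its fields are not yet scanned
	black              // seen and fully scanned
)

type object struct {
	name  string
	color color
	refs  []*object
}

// collector is a toy marker with an explicit grey worklist.
type collector struct {
	marking bool
	work    []*object
}

// shade turns a white object grey and queues it for scanning.
func (c *collector) shade(o *object) {
	if o != nil && o.color == white {
		o.color = grey
		c.work = append(c.work, o)
	}
}

// writePointer stands in for the program's pointer store. While
// marking is in progress, the barrier shades the new target so a
// black object never holds the only reference to a white one.
func (c *collector) writePointer(src, dst *object) {
	if c.marking {
		c.shade(dst)
	}
	src.refs = append(src.refs, dst)
}

// drain scans grey objects until the worklist is empty.
func (c *collector) drain() {
	for len(c.work) > 0 {
		o := c.work[len(c.work)-1]
		c.work = c.work[:len(c.work)-1]
		for _, r := range o.refs {
			c.shade(r)
		}
		o.color = black
	}
}

func main() {
	root := &object{name: "root"}
	late := &object{name: "late"}

	c := &collector{marking: true}
	c.shade(root)
	c.drain() // root is now black

	// The program keeps running and stores a new pointer into root.
	c.writePointer(root, late)
	c.drain()

	fmt.Println(root.name, "marked:", root.color == black)
	fmt.Println(late.name, "marked:", late.color == black)
}
```

Without the barrier, `late` would stay white even though `root` points to it, because `root` was already scanned. That is the hazard a write barrier guards against, and it is why the program can keep running during most of the marking work.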

Here’s what the GC algorithm looked like in Go 1.4:

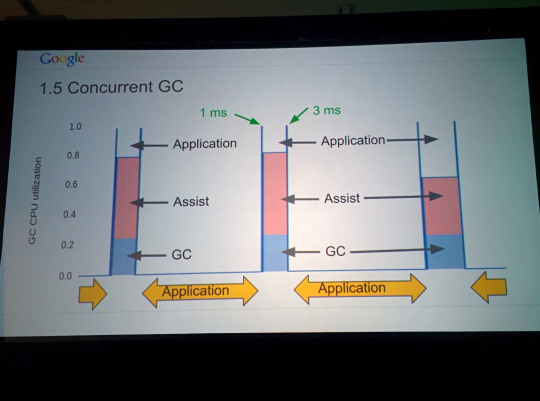

Here it is in Go 1.5:

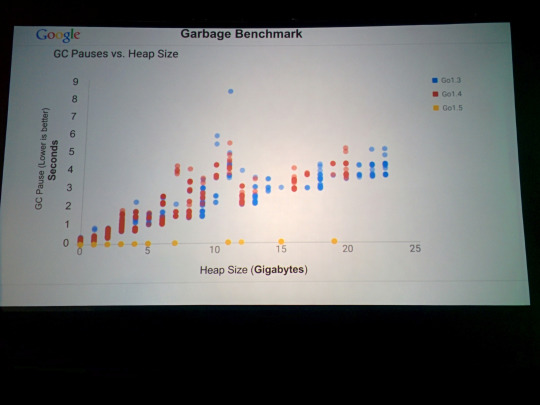

Note the shorter stop-the-world pauses. During concurrent GC, the collector uses 25% of the CPU. Here are the benchmarks:

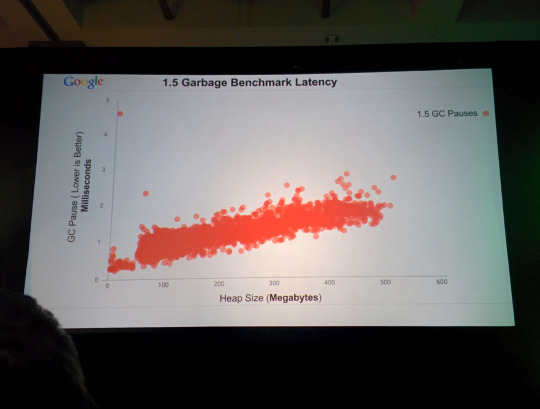

In previous versions of Go, GC pauses were in general much longer, and they grew as the heap size grew. In Go 1.5, GC pauses are more than an order of magnitude shorter. Zooming in, there is still a slight positive correlation between heap size and GC pauses, but the team knows what the issue is and it will be fixed in Go 1.6.
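If you want to check pause times in your own program rather than rely on benchmark charts, the standard `runtime` package exposes them. The sketch below is one way to do that; the `churn`, `pauseStats`, and `measure` helpers and the allocation sizes are assumptions made up for the example, and the numbers it prints will vary by machine and Go version.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// churn allocates n 1 KiB buffers and keeps every 100th one alive,
// so the collector has both garbage and live data to deal with.
func churn(n int) [][]byte {
	var keep [][]byte
	for i := 0; i < n; i++ {
		b := make([]byte, 1024)
		if i%100 == 0 {
			keep = append(keep, b)
		}
	}
	return keep
}

// pauseStats reports the number of completed GC cycles and the
// cumulative stop-the-world pause time since the program started.
func pauseStats() (cycles uint32, total time.Duration) {
	var m runtime.MemStats
	runtime.ReadMemStats(&m)
	return m.NumGC, time.Duration(m.PauseTotalNs)
}

// measure runs the workload, forces one collection so there is at
// least one cycle to report, and returns the resulting statistics.
func measure(n int) (kept int, cycles uint32, total time.Duration) {
	live := churn(n)
	runtime.GC()
	cycles, total = pauseStats()
	return len(live), cycles, total
}

func main() {
	kept, cycles, total := measure(200000)
	fmt.Printf("kept %d buffers, %d GC cycles, %v total pause\n", kept, cycles, total)
	if cycles > 0 {
		fmt.Printf("average pause: %v\n", total/time.Duration(cycles))
	}
}
```

For a per-cycle view without changing any code, running a program with the environment variable `GODEBUG=gctrace=1` makes the runtime print a summary line for each collection.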

There is a slight throughput penalty with the new GC algorithm, and that penalty shrinks as the heap size grows:

Moving forward

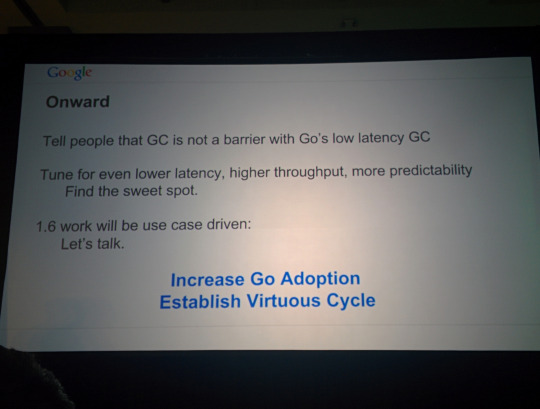

Tell people that, with Go’s low-latency GC, garbage collection is no longer an issue. Moving forward, the team plans to tune for even lower latency, higher throughput, and more predictability. They want to find the sweet spot between these tradeoffs. Development work for Go 1.6 will be use-case and feedback driven, so let the team know.

The new low-latency GC will make Go an even more viable replacement for manual-memory-management languages like C.

Q & A

Q: Any plans for heap compaction?

A: Our approach has been to adopt the techniques that have served the C language community well, which is to avoid fragmentation to begin with by storing objects of the same size in the same memory span.
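The idea in that answer, keeping objects of the same size in the same span, can be sketched in a few lines. This is a toy model, not the Go allocator: the `span` type, the size classes (16, 32, 64, 128 bytes), and the slot counts are all hypothetical.

```go
package main

import "fmt"

// span is a fixed chunk of memory carved into equal-sized slots.
type span struct {
	slotSize int
	free     []int // indexes of free slots
	buf      []byte
}

// newSpan creates a span with the given slot size and slot count.
func newSpan(slotSize, slots int) *span {
	s := &span{slotSize: slotSize, buf: make([]byte, slotSize*slots)}
	for i := slots - 1; i >= 0; i-- {
		s.free = append(s.free, i)
	}
	return s
}

// alloc hands out one free slot, or reports false if the span is full.
func (s *span) alloc() (slot int, ok bool) {
	if len(s.free) == 0 {
		return 0, false
	}
	slot = s.free[len(s.free)-1]
	s.free = s.free[:len(s.free)-1]
	return slot, true
}

// release returns a slot to the span. Every slot has the same size,
// so any later request in this class fits the hole exactly.
func (s *span) release(slot int) { s.free = append(s.free, slot) }

// classes are made-up size classes for the example.
var classes = []int{16, 32, 64, 128}

// sizeClass rounds a request up to the smallest class that fits.
func sizeClass(n int) (int, bool) {
	for _, c := range classes {
		if n <= c {
			return c, true
		}
	}
	return 0, false
}

func main() {
	spans := map[int]*span{}
	for _, c := range classes {
		spans[c] = newSpan(c, 4)
	}

	class, _ := sizeClass(20) // rounds up to the 32-byte class
	s := spans[class]
	a, _ := s.alloc()
	b, _ := s.alloc()
	s.release(a)
	c, _ := s.alloc() // reuses the slot that a occupied
	fmt.Println("class:", class, "slots:", a, b, c)
}
```

Because a freed slot is always exactly the right size for the next request in its class, holes never become too small to reuse, which is how this scheme sidesteps fragmentation without moving objects. The cost is some wasted space inside each slot when a request is rounded up.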
